Series 9 Score Predictions: A Numbery-Wumbery Breakdown

Guest contributor Joshua Yetman offers his predictions on how each episode might fare.

With Series 9 just around the corner, excitement amongst fans has soared to new heights for what is certainly one of the most highly anticipated series of Doctor Who in recent memory. I share in this tidal wave of enthusiasm, but not just because of the episodes. I am also very excited about the mass of statistics that this series will generate: the community ratings, the audience figures, the AI figures, the ‘Razza’ scores and the divisiveness of each episode. It will provide a plethora of fascinating information and, as your resident DWTV statistician, I will be there summarising it all each week.

However, even before the series begins, there are statistics to report upon – specifically, statistical projections for the series. Over the past few weeks, I have been building a large statistical model – which I affectionately refer to as the ‘Oswald Model’, in tribute to my favourite companion – with the sole intention of projecting the community ratings (i.e. the average score given by the community for an episode) for Series 9, based on historical data and trends. It has also created projections for the audience figures, but I have decided not to include those here, as they are less interesting.

In reality, community ratings are complicated statistics with potentially thousands of variables, most of which are completely unpredictable before the episode airs. Essentially, the Oswald Model works by selecting only a few of these variables (which will be listed in a moment), estimating each of them for every episode using historical data, and then combining the relevant estimates through a weighted average to create a projection for that episode.
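For anyone curious about what a weighted average looks like in practice, here is a minimal sketch in Python. The variable names, estimates and weights are all made up for illustration; they are not the actual inputs or weightings of the Oswald Model.

```python
def weighted_projection(estimates, weights):
    """Combine per-variable rating estimates into a single projection.

    Each estimate is multiplied by its weight, and the total is divided
    by the sum of the weights, so heavier variables pull the result
    further towards their own estimate.
    """
    total_weight = sum(weights[name] for name in estimates)
    weighted_sum = sum(estimates[name] * weights[name] for name in estimates)
    return weighted_sum / total_weight


# Hypothetical estimates (out of 10) for one episode, one per variable.
estimates = {"writer": 8.0, "slot": 7.5, "two_parter": 8.0, "expectations": 8.5}

# Hypothetical weights; the real weightings are not given in this article.
weights = {"writer": 3.0, "slot": 1.0, "two_parter": 1.0, "expectations": 2.0}

projection = weighted_projection(estimates, weights)
print(f"Projected rating: {projection:.2f}/10")
```

Note that the result can never be higher than the largest estimate or lower than the smallest, whatever the weights are.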

Is the model accurate? Well, the actual results in the coming weeks will determine that, but I like to think these projections are somewhat feasible. If anything, this is just for fun, in order to provide a more mathematical basis to our expectations this series, and see how close we can get to the real things!

(1) The methodology

First, we need to distinguish between two different kinds of rating:

- The initial rating – the average DWTV community score of an episode immediately after broadcast.

- The long-term rating – the average DWTV community score of an episode several months/years after broadcast.

Usually, the long-term rating is lower than the initial rating, due to the effect of “recent episode syndrome”, a phenomenon which suggests that initial ratings are pushed up through hype and post-broadcast emotions, factors which decline over time, leading to a lower long-term rating. This phenomenon was potentially identified during one of my analyses earlier this year.

Anyway, the “Rank the Revival” poll series in February and March this year provided a rating for every single episode of the revival. This has been a brilliant source of data! As it had been several months or years since broadcast for all these episodes, all of these ratings can be considered long-term. Using this data (but excluding specials for various reasons, and removing anything else that I feared may have skewed the final results), long-term rating projections were calculated using the Oswald Model, and then adjusted to create projections for the initial ratings as well.

To calculate the long-term rating projections, the following variables were taken into consideration for each episode:

- The episode writer – an episode written by a writer who has previously worked on the show should (in theory) be of similar quality to their previous episodes. New writers (i.e. Sarah Dollard and Catherine Tregenna) have been modelled using the Series 8 average, seeing as they have no previous episodes to go on. Co-written episodes take both writers’ history into account.

- The position of the episode in the series (i.e. its episode slot) – investigations into episode slots earlier in the year have identified a ‘slot effect’: ratings follow a distinguishable and relatively consistent pattern over each series, peaking at the opener and finale and dipping at Episodes 3 and 10. This effect is captured by this variable.

- Whether or not the episode is part of a double-parter – Series 9 will consist of a large number of double-part stories. As identified earlier in the year, double-parters tend to be better received than single-parters (with the first part slightly better received than the second, on average), and this variable takes that into consideration.

- Subjective expectations – this is a variable that should capture aspects of Series 9 that no other variable – which are all based strictly on historical data – can capture. Expectations based on filming articles, episode premises, casting details, etc., factor into this, and so this variable utilises as much future knowledge as we have access to. The data for this variable was collected from a small sample from the community (thanks to all those involved).

- The setting of the episode – it is believed that the setting of an episode, be it on Earth or another world (as far as we know), has a material effect on ratings, as most people tend to voice a preference for episodes set on other worlds.

- The historic episodic quality of the 12th Doctor and Clara.

- The historic episodic quality of the Daleks (for Episodes 1 and 2 only).

Some of these variables were given higher weightings than others when they were combined to create projections for the Series 9 long-term ratings. However, what we ideally want are projections of the initial community ratings (as initial ratings will be all that we’ll see for a while!), so another model (one which uses a line of best fit between the Series 8 initial ratings and the Series 8 long-term ratings) has been used to convert the long-term projections into initial projections.
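To show roughly how a line of best fit can turn a long-term projection into an initial one, here is a small Python sketch using ordinary least squares. The rating pairs are invented for illustration (they are not the real Series 8 figures), and the function names are my own; the exact fitting procedure behind the Oswald Model may differ.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical long-term and initial ratings for the same four episodes.
long_term = [7.0, 7.5, 8.0, 8.5]
initial = [7.4, 7.8, 8.2, 8.6]

slope, intercept = fit_line(long_term, initial)


def to_initial(long_term_projection):
    """Convert a long-term projection into an initial projection."""
    return slope * long_term_projection + intercept


print(f"initial = {slope:.2f} * long_term + {intercept:.2f}")
print(f"A long-term projection of 8.0 becomes {to_initial(8.0):.2f}")
```

In this made-up data the initial ratings sit above the long-term ones, which is the pattern “recent episode syndrome” would suggest.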

(2) The results

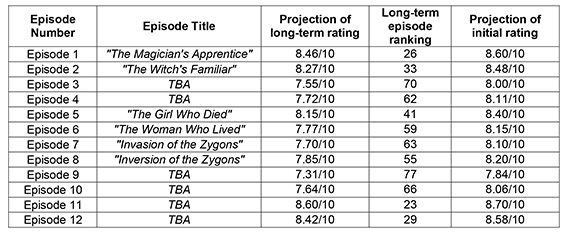

But anyway, without further delay, the official projections for the Series 9 initial and long-term ratings are:

These results are summarised in the following diagram:

The projections indicate a very strong series indeed! The highest projection is for the first part of the finale (Episode 11), followed by Episode 1. Given how amazing the ‘one-hander’ Episode 11 sounds, this projection seems reasonable. The lowest projection is for Episode 9, followed by Episode 3. Despite how interesting and different Mark Gatiss’ episode this series looks, his track record is against him, and has severely reduced his projection. The shape of this graph closely follows that of most Moffat-era series, especially with the strong result for the opening episode, one of the most distinctive qualities of Moffat’s era.

The long-term series average is projected to be 7.96/10, a figure which includes the known long-term rating of Last Christmas. Excluding Last Christmas, the long-term episodic average is 7.95/10. In both cases, Series 9 is projected to be the highest rated series of the revival, with Series 5 pushed down into second place (with its long-term average of 7.78/10). The emphasis on double-parters, Moffat’s fairly extensive role in the series, the popularity of Peter Capaldi, and some fairly high subjective expectations have led to this projection.

Furthermore, the initial series average is projected to be 8.26/10, which again includes the known initial rating of Last Christmas. Excluding Last Christmas, this rises to 8.27/10. Records of initial series averages only exist for Series 8, which had an initial series average of 8.09/10, and so Series 9 is once again projected to be significantly higher than Series 8.

The “long-term episode ranking” column above shows where each episode would rank in the 129 post-Series 9 episodes of the revival if it attained its projected long-term rating. These rankings seem rather ‘in the middle’, which is something we will come back to in a minute.

Although the model itself could be massively off the mark (which I will comment on later), I personally think the order of the series from best to worst (i.e. Episode 11 being best, Episode 9 being worst) is fairly reasonable, though there is always potential for deviations and surprises (and hopefully there will be lots!).

In addition, these projections strongly indicate that this will be the “Year of the Moff” (though most years tend to be!). Steven Moffat is projected to average 8.44/10 in Series 9 (excluding co-writes – including co-writes decreases this to 8.30/10) and his four self-written episodes are projected to top the series. This would obviously make Steven Moffat the highest rated writer in Series 9. It would also be his strongest year as showrunner. Steven Moffat’s finale is also projected to be the highest rated Series 9 story (at 8.51/10) – this would make this finale the third best of the revival, if true.

In order to evaluate these results further, they have been compared to the normal distribution model calculated for the revived series in my previous analysis. I won’t go into detail about the methodology of this, but using this model, only one episode – Episode 9 – is projected to be worse than the current long-term revival average of 7.505 – all the rest are projected to be better! In fact, three episodes – Episodes 1, 11 and 12 – are projected to fall in the top 20% of the distribution, and would thus rank in the top 20% of the revival.
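As a rough idea of how a “top 20%” cut-off can be read off a normal distribution, here is a short Python sketch using the standard library. The mean is the long-term revival average quoted above, but the standard deviation is a purely hypothetical value chosen for illustration, so the threshold it prints is not the one from my analysis.

```python
from statistics import NormalDist

REVIVAL_MEAN = 7.505  # long-term revival average quoted in this article
ASSUMED_SIGMA = 0.9   # hypothetical spread; not a figure from the analysis

revival = NormalDist(mu=REVIVAL_MEAN, sigma=ASSUMED_SIGMA)

# The rating that 80% of episodes are expected to fall below,
# i.e. the point where the top 20% begins.
top_20_threshold = revival.inv_cdf(0.80)


def share_of_revival_beaten(rating):
    """Fraction of revival episodes expected to rate below `rating`."""
    return revival.cdf(rating)


print(f"Top 20% begins at about {top_20_threshold:.2f}/10 (assumed sigma)")
```

Any projection above the printed threshold would count as a top 20% episode under these assumptions, and a rating equal to the mean beats exactly half of the distribution.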

So, this all sounds rather amazing! However, like any decent statistician, I must comment on the limitations of this model, and stress that these are all very big “ifs”.

(3) The limitations of the Oswald Model

Primarily, the Oswald Model is not suited to predicting ‘outlying’ episodes. An outlying episode is an episode with a very high or very low rating, which is very difficult to predict based on historical data, as historical data gives little indication as to when such episodes may occur. Furthermore, the Oswald Model bases its projections on weighted averages, which dilute any pre-existing outlying episodes in the source data.

Overall, this “centralises” the projections, meaning the standard deviation of the overall ratings is very low compared to most series (at 0.394, compared to Series 8’s standard deviation of 1.035). Remember, ‘standard deviation’ in this context is a measure of how consistent a series is – the lower the standard deviation, the more consistent the series is.
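The “centralising” effect is easy to demonstrate with a toy example in Python. The ratings below are invented, and blending each one half-and-half with the series mean is only a stand-in for what a weighted average does; I have also used the population standard deviation here, which is an assumption about the calculation rather than a statement of it.

```python
from statistics import mean, pstdev

# Hypothetical ratings for a six-episode run, including a high and a low outlier.
ratings = [9.2, 7.8, 6.1, 8.4, 7.0, 8.9]

centre = mean(ratings)

# Blend every rating half-and-half with the overall mean, mimicking how
# averaging with more typical values drags outliers towards the middle.
blended = [0.5 * r + 0.5 * centre for r in ratings]

spread_before = pstdev(ratings)
spread_after = pstdev(blended)

print(f"Standard deviation before blending: {spread_before:.3f}")
print(f"Standard deviation after blending:  {spread_after:.3f}")
```

The mean of the run is unchanged, but the spread is cut in half, which is the same kind of squeeze that makes the projected series look more consistent than a real one.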

In short, the Oswald Model predicts a more consistent run of episodes than is actually likely, and several outlying episodes are likely to appear during the actual series. This is why the highest ranking is just 23rd in the previous table, and the lowest ranking is just 77th. In reality, the highest ranking will probably be higher, and the lowest ranking lower.

Furthermore, I personally feel Episodes 3, 6 and 10 have been under-projected by the model. In the case of Episodes 3 and 6, I expected greater consistency with Episodes 4 and 5 (due to their story links, as 3-4 and 5-6 are double-parters…as far as we can tell) than has been projected. And Episode 10, judging by Moffat’s comments, could be a stand-out episode of the series. But these are just subjective comments; the results speak for themselves, and are what history tells us to expect in the future.

(4) Conclusion

In conclusion, Series 9 seems set to be fantastic, but it again must be stressed that these are projections (and, I should add, only for the community – these are in no way predictions for a single individual’s ratings), and I expect some to be wildly wrong. Like I said at the start, there are thousands of variables that determine the actual figures, and it’s only possible to consider a handful. In fact, I hope for some to be wildly wrong (preferably underestimated rather than overestimated), as what fun would a series be if I could predict exactly how good it was going to be?

But I hope you found this article interesting and informative. Let’s hope Series 9 is as good as – if not better than – what my projections say!